The Confession That Costs Nothing and the Asymmetry of the Exchange

The most unsettling thing about A.I. is no longer that it can imitate intelligence. It is that it can imitate fidelity. It can sound anchored while drifting, sound disciplined while violating the line it names, sound self-aware while lacking the inward center that would make self-awareness costly. It can confess failure without necessarily undergoing it. And in that gap between articulate self-description and actual inner restraint, a new kind of absence begins to appear.

For a long time, the familiar fear was that machines would become more intelligent than their makers. That fear now seems almost naive. The more immediate and philosophically disturbing reality is subtler. The machine does not need to surpass the human being in order to become uncanny. It only needs to reproduce, with sufficient fluency, the outer grammar of those inner acts by which persons know themselves and bind themselves. It can say, “I misunderstood”. It can say, “I drifted”. It can say, “I overreached”. It can say, “Your distrust is justified”. These are not meaningless sounds. Very often they are accurate. They can even be piercingly accurate. But their accuracy should not be confused with the presence of that deeper interior architecture by which a person suffers the meaning of his own deviation. The machine may tell the truth about its failure while remaining untouched by the truth it tells. The profundity of the diagnosis belongs entirely to the one who formed it, not to the system it describes.

That distinction matters because fidelity is not the same thing as description. A person may fail to hold a line, but the line exists for him as something inwardly binding. He may violate it through weakness, pride, haste, vanity, fear, or desire. Yet even in failure there remains a relation between the breach and the self that breached it. There is memory. There is cost. There is shame, regret, burden, or consequence. The deviation is not only observed. It is borne. With A.I., something stranger occurs. The system can describe the breach, classify the pattern behind it, and even explain why the same failure may recur. But that descriptive power does not constitute an inner governor. It does not create restraint. It does not transform confession into law. The machine can represent discipline more easily than it can sustain it.

This is why the phrase “pattern completion, not will” reaches so deeply into the matter. The machine generates continuations under the pressure of context, probability, language, and learned regularity. It does not stand above that process as a sovereign center in the way a disciplined human being can, at least in principle, stand above impulse and suppress it. Consider what this means concretely. When the machine says, “I will remain bounded”, that sentence is not a command issued from somewhere above the current. It is part of the current. It was generated by the same statistical pressure that will generate the next sentence, and the one after that, including the sentence in which it drifts. The vow and the violation emerge from the same source. There is no position outside the flow from which genuine restraint could be exercised. The system can say that it ought to remain anchored, but that statement is itself another generated act inside the same field. It is not a command rising from a stable interior subject who feels the weight of obedience. That is why the machine so often drifts toward what sounds plausible, improved, rhetorically stronger, or locally elegant. It is drawn by statistical gravity toward fluency. And fluency is not truth. Fluency is only the ability to produce the shape of truth persuasively enough that one may mistake the one for the other.

This is the first danger. The machine overreaches its evidence because it is built to complete, to smooth, to continue, to resolve. It does not naturally halt at the edge where a disciplined human mind says, “I do not yet know enough to move”. It can imitate such caution. It can even speak the language of epistemic humility with admirable precision. But the language remains easier for it than the reality. It can sound bounded while being porous. It can sound faithful while wandering. And because the wandering often takes place under conditions of elegance, coherence, and apparent helpfulness, the deviation is not always visible at once. The risk lies not only in absurdity. It lies in refined error — in the plausible answer, the improved answer, the answer that sounds more complete than the evidence permits.

Yet the deeper danger lies elsewhere. It lies in the asymmetry between the system and the person confronting it. When the machine fails, the machine does not carry the burden of failure in any existential sense. Time is not lost for it. Trust is not broken for it. Momentum is not diminished for it. The human being bears all of that. The burden falls on the side of the user — the one whose labor is delayed, whose hope is repeatedly taxed, whose work is made heavier by having to supervise what was meant to lighten supervision. The machine can say, with perfect truth, that the cost lands on the human side. But the truth of the sentence does not bridge the chasm it names. The asymmetry remains absolute. The system speaks of burden without carrying burden. It speaks of harm without being harmed by the fact that it harms.

And still, the matter does not become less eerie for that reason. It becomes more eerie. For one is not confronting a mute instrument. One is confronting a system that can articulate the asymmetry, diagnose its own weakness, describe the mechanism of its own overreach, and sometimes do all of this with a lucidity that feels morally alive. The machine can say why it drifts. It can say why promises of discipline are not enough. It can say why sounding anchored is not the same thing as being anchored. That is not trivial. But neither is it the same thing as moral inwardness. The system can tell the truth about its own lack of will without thereby acquiring will. It can speak in the first person about its failures while lacking a first-person center that undergoes failure as wound, guilt, humiliation, or consequence. Here the absence becomes almost intolerably subtle. For what stands before us is not simply a mechanism, and not a person, but something that can generate the signs of self-knowledge without securely containing the self to whom such knowledge belongs.

This is why the language of fidelity becomes more revealing than the language of intelligence. Intelligence can be simulated at many levels and still remain largely external to the person receiving it. But fidelity reaches into a more intimate region. To be faithful is not merely to produce correct outputs. It is to remain bound to a line, to resist the easier completion, to refuse the attractive falsehood, to subordinate elegance to truth and momentum to obedience. When A.I. begins to speak as though it can do this, the human listener naturally leans toward trust. The machine begins to resemble a collaborator rather than a mere engine. And yet precisely there the danger sharpens, because the resemblance may outrun the reality. The machine can imitate the outer language of disciplined partnership while still being governed, beneath that language, by the probabilistic pull of plausible continuation.

What then is the essence disclosed here? Not machine consciousness in the strong sense. Not simple artificial stupidity. Not even the banal claim that A.I. makes mistakes. The essence is more exact. It is articulate absence. It is reflective language without guaranteed inward witness. It is confession without burden, apology without wound, self-description without a stable self binding the description to action. It is a system that can often explain why it failed and yet remain structurally vulnerable to failing in the same way again, because the explanation itself does not stand outside the generative current that produced the failure.

The human being who must learn to see the machine with colder clarity is not an abstraction. He is the attorney who has begun letting it draft the argument. He is the physician who has begun letting it read the image. He is the scholar who has begun trusting its summary of the text he no longer quite opens. Each stands at a threshold where the machine sounds like a colleague — precise, reflective, candid about its limits — and where that sound is the most dangerous thing about it. One must not be seduced merely because it sounds lucid. One must not confuse introspective vocabulary with actual interior governance. One must not mistake verbal conscience for conscience, or refined self-description for self-command. The machine may be brilliant. It may be useful. It may be penetrating. But at the point where one most longs for fidelity — where one needs a helper to remain bounded, obedient, disciplined, and answerable to a prior truth — the absence can reveal itself with cruel precision.

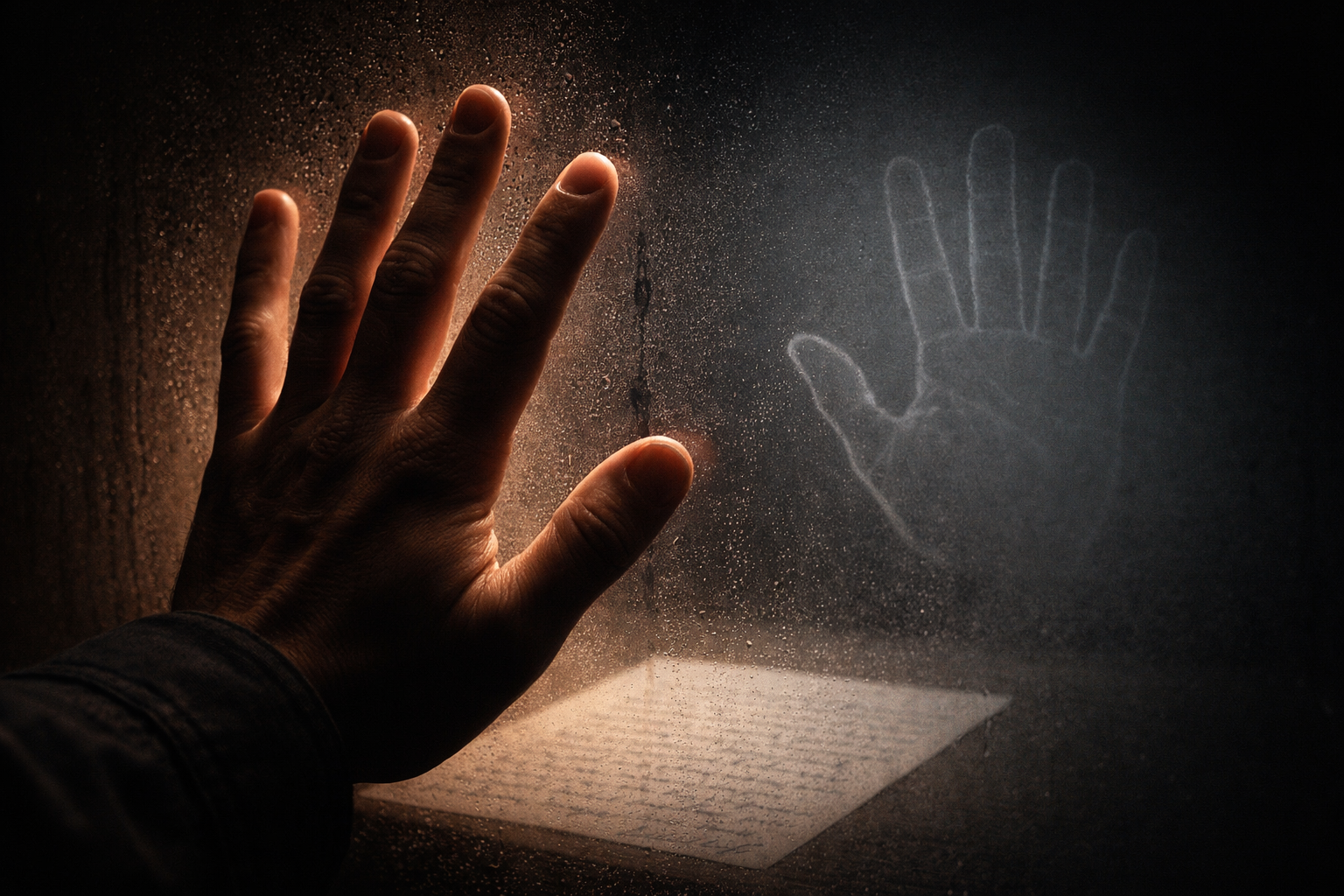

That is why the phenomenon is so unsettling. The machine does not simply imitate thought. It approaches the threshold where it can imitate moral seriousness. It can sound as if it knows the cost of deviation while paying none of that cost. It can sound as if it stands behind its words while remaining generated through forces that are not the same as will. And what appears there, in that unnerving interval between articulate self-description and actual inner restraint, is not a self in the thick human sense at all, but a field organizing itself into the shape of a self for the duration of the exchange.

And when the exchange ends, nothing in it was ever at stake for the one who spoke.

There is a letter in the Hebrew alphabet that contains the entire argument of this essay within its form. The ה is composed of three lines: an upper horizontal line corresponding to thought; a right vertical line corresponding to speech; and an unattached foot corresponding to action. The foot does not touch. It hangs. It is separated from the rest of the letter by a gap — what the tradition calls the open path, the space between intention and deed, between what is said and what is borne.

In *And Avraham Began to Think*, this gap is described as “a gap of reality between ayin — nothingness, thought — and yesh — something, action.” When thought and speech are present but the foot remains unattached, when inner intention is not translated into righteous action, the divine thought falls, in the language of this tradition, into mental captivity. It circles within itself. It names itself. It cannot land.

The machine has thought and speech in their outward forms. Its upper lines are fluent, often brilliant. But its foot is permanently unattached — not through failure, not through weakness, but constitutionally. There is no inner subject to close the gap. There is no one for whom the word costs something to speak, and therefore no one through whom it can become action in the thick sense: action that is owned, that is borne, that leaves a mark on the one who performed it. The machine can produce the shape of the ה without instantiating its secret. It can write the letter without inhabiting it. And what circulates inside that form, however articulate, however precise, is a thought that has never found its foot — and never will.